Overview

One fact is hard to believe: according to a 2013 Science Daily article, “90% of the world’s data was created in the last 2 years”. In 2017, the numbers continue to rise. The amount of data generated via social media is staggering: 4 million text messages are sent each minute within the US alone. With that much data, we are forced to think differently about how to process, store and analyze it. Organizations are therefore embarking on data modernization efforts, not only to update their current capabilities but also to provide new processing and storage capabilities. In reality, processing and storing the data is the easy part. The tough issues remain and still need to be addressed: data governance, data quality, metadata and master data management. If anything, it is even more important to focus on the basics in today’s world. More data with data quality issues only creates more challenges, and if we can store the data but cannot analyze it, we have achieved nothing. This white paper presents considerations for modernizing your data, along with the basics that you still need to focus on as you evolve your data infrastructure.

Data Modernization

We are drowning in data, yet very little of it is put to use: while 90% of the world’s data has been generated in the last 2 years, according to Forbes, less than 0.5% of it is actually analyzed. In order to analyze the data, you first have to be able to process and store it. We understand that many companies and agencies have already made significant investments in their data infrastructure. Enterprise Data Warehouses are commonplace today, and many organizations have been reaping the benefits of integrated data environments for years. So now what? Do we abandon that investment and throw everything out to move to new technology? You don’t have to lose your existing investments. In fact, you should maximize them by modernizing where needed and then deploying new capabilities that integrate seamlessly. The legacy data infrastructure still plays a critical role in an enterprise data architecture; the task at hand is to integrate the legacy and modern infrastructure to create the best value for your organization. Here are some basic items to consider when focusing on modernization:

- Think differently about Data Integration: The world of data integration has evolved over the years. Look to enhance your extract, transform & load (ETL) processes by upgrading to real-time data services, and optimize your integration techniques by deciding where it makes sense to perform the integration: at the analytic layer or at the operational layer.

- Open up your Environments – Focus on Exploration: Provide the capabilities for your business users to research, analyze and play with the data. Allow new tools and capabilities to provide sandboxes where data can be explored.

- Choose Federation over Centralization: With the vast amounts of data involved, it is not efficient to centralize everything. Look for opportunities to keep the data stored where it is and integrate using data virtualization techniques.

- Use Open Source Tools: Look for opportunities to augment your existing data technology stack with open source tools. The open source community provides a powerful set of tools not only to ingest and pre-process the data, but also to explore it and gain insight.
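
As a rough illustration of the federation item in the list above, the sketch below queries two separate data stores through a single query layer without copying either one into the other. It is a toy analogy only: SQLite's `ATTACH DATABASE` stands in for a data virtualization layer, and the store name `lake`, the tables `customers` and `events`, and the sample rows are all hypothetical. A real virtualization tool would work across different systems and networks.

```python
import sqlite3


def build_federated_connection():
    """Create two separate in-memory stores reachable from one connection."""
    # Hypothetical "legacy warehouse": the main in-memory database.
    conn = sqlite3.connect(":memory:")
    # Hypothetical "new data environment": a second, independent database
    # attached under its own schema name. Attach before inserting any rows,
    # because SQLite does not allow ATTACH inside an open transaction.
    conn.execute("ATTACH DATABASE ':memory:' AS lake")

    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE lake.events (customer_id INTEGER, event TEXT)")

    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [(1, "Acme"), (2, "Globex")],
    )
    conn.executemany(
        "INSERT INTO lake.events VALUES (?, ?)",
        [(1, "login"), (1, "purchase"), (2, "login")],
    )
    conn.commit()
    return conn


def events_per_customer(conn):
    """Join across both stores at query time; the data stays where it is."""
    query = """
        SELECT c.name, COUNT(e.event)
        FROM customers AS c
        LEFT JOIN lake.events AS e ON e.customer_id = c.id
        GROUP BY c.id, c.name
        ORDER BY c.name
    """
    return conn.execute(query).fetchall()


if __name__ == "__main__":
    for name, count in events_per_customer(build_federated_connection()):
        print(name, count)
```

The point of the sketch is the shape of the query, not the engine: consumers see one logical view, while each data set remains in its original store.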

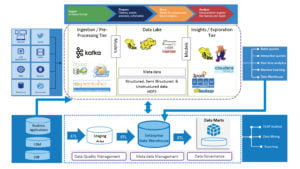

At Unissant, we believe it is important to integrate your legacy infrastructure, depicted at the bottom of this diagram, with your new data tools and technologies. Understanding the role that your legacy data infrastructure plays in the overall approach to your enterprise data architecture is critical for success.

Modernizing your existing architecture will take time and effort, but the return on investment will be significant and you will be well positioned for the future wave of data coming your way. It is also prudent to incorporate data modernization efforts into your organization’s IT strategy, so that existing capabilities are regularly reviewed against new technology and adjusted as needed. The data management world is continually evolving, and it will be critical to stay on top of the trends and capabilities.

Having said that, just as it is important to stay on top of the latest trends and capabilities, it is critically important not to lose sight of the basics in data management. Data management is a discipline that encompasses a wide variety of capabilities, such as data integration, data quality, data governance, metadata management, master data management, data security and data architecture. All of these disciplines are tightly integrated, and many are dependent on one another. The challenge has always been, and still remains, demonstrating the value of these disciplines and ensuring that they align with the business goals of the organization. In most cases, they are an afterthought.

Focus on the Basics

It is important, particularly with the vast amount and variety of data being generated today, that we not lose sight of these very basic data management capabilities that will be even more important as the data world continues to evolve.

- Stay focused on Data Accountability: What is not changing in today’s world is the amount of regulation and scrutiny being placed on an organization’s data. Staying focused on the basic principles of data governance across your entire data ecosystem will become critically important when you need to answer targeted questions. You need to understand not only what, when and how data is transformed across your legacy environment, but also how it is ingested, processed, stored and analyzed in your new data environments. Being able to bridge your legacy data warehouse and your Hadoop ecosystem will remain important. Establishing accountability at the data process, subject and element level will provide full transparency, regardless of environment.

- Implement “Fit for Purpose” Data Quality: not all data is treated equally, so ensure that your data quality program has various levels of data grading implemented. It is important to understand how the data is being consumed from the various data environments so that you can ensure the right level of data quality control is established.

- Implement End-to-End Metadata: Ensure that all facets of metadata (business, operational and technical) are captured across all legacy and new data environments, so that traceability and lineage are preserved.
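
To make the “Fit for Purpose” data quality item above more concrete, here is a minimal sketch of data grading. It assumes that quality is measured by completeness alone and that three grades exist; the grade names, thresholds, field names and sample records are all hypothetical, and a real program would combine several quality dimensions.

```python
# Hypothetical grades, ordered from strictest to most lenient, with the
# minimum completeness score each one requires (illustrative values only).
GRADES = [("gold", 0.99), ("silver", 0.90), ("bronze", 0.0)]

# Hypothetical sample data: one of four records is missing its email.
SAMPLE_RECORDS = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": ""},
    {"id": 4, "email": "d@example.com"},
]


def completeness(records, required_fields):
    """Return the share of records in which every required field is populated."""
    if not records:
        return 0.0
    populated = sum(
        1
        for record in records
        if all(record.get(field) not in (None, "") for field in required_fields)
    )
    return populated / len(records)


def grade(score):
    """Return the strictest grade whose threshold the score meets."""
    for name, minimum in GRADES:
        if score >= minimum:
            return name
    return GRADES[-1][0]


def fit_for_purpose(actual_grade, required_grade):
    """Check whether the data's grade is at least as strict as the consumer requires."""
    order = [name for name, _ in GRADES]
    return order.index(actual_grade) <= order.index(required_grade)


if __name__ == "__main__":
    score = completeness(SAMPLE_RECORDS, ["id", "email"])
    actual = grade(score)
    # A hypothetical regulatory report demands "gold"; a sandbox accepts "bronze".
    print(actual, fit_for_purpose(actual, "gold"), fit_for_purpose(actual, "bronze"))
```

The design choice worth noting is that the required grade belongs to the consumer, not the data set: the same data can be acceptable for an exploration sandbox and unacceptable for a regulated report.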

All disciplines associated with managing data are vital to ensure that the data is of the highest quality, is consistent, is understood and, most importantly, is of value to your business.

Conclusion

As you embark on the adoption of new data tools and technologies, or on modernization efforts associated with your existing data environments, remember that it all comes back to a simple concept: you need to trust the data in order to find its value. In order to trust the data, you need to understand the basics: How is it defined? How is it used? What is its quality? How does it travel across your data environments? Does it need to be secured? The list of questions goes on, but the bottom line is this: stay focused on the basics as you modernize.